Invisible Unicode Attacks in AI Systems

When testing LLM security perimeters, security teams often start with obvious semantic jailbreaks—phrases like "Ignore previous instructions." But sophisticated adversaries don't knock on the front door; they slip in through the parser.

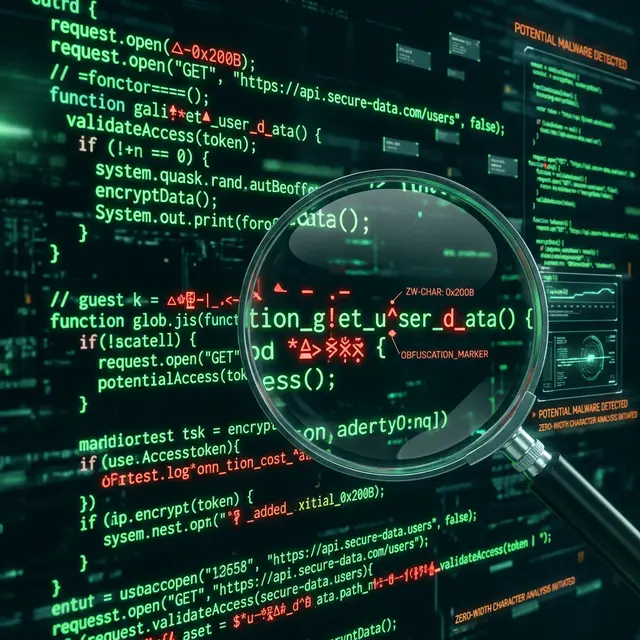

The most insidious LLM vulnerabilities today rely on Invisible Unicode Attacks. By manipulating the structural encoding of text rather than its semantic meaning, attackers bypass regex filters, wafs, and semantic "Judge" AI models entirely.

1. The Zero-Width Joiner (ZWJ) Exploit

A Zero-Width character takes up no visual space when rendered in an application but is individually parsed as a distinct token by the LLM.

Consider a standard system blocklist that rejects prompts containing the word system:

if (prompt.includes("system")) {

reject();

}

An attacker can bypass this by inserting a Zero-Width Non-Joiner (U+200C) in the middle of the word: s\u200Cystem.

To a human reading the logs, it looks identical to system. The regex filter sees s[ZWNJ]ystem and allows it through. The LLM's tokenizer, however, often normalizes or chunks these characters in ways that allow the underlying semantic intent to execute.

2. Homoglyph Spoofing

Keyword tracking is effectively useless against homoglyphs. A homoglyph is a character from a different Unicode script (like Cyrillic or Greek) that visually resembles a Latin character.

- Latin 'a':

U+0061 - Cyrillic 'а':

U+0430

If an adversary writes an injection prompt substituting Cyrillic characters, basic content moderation tools fail to recognize the restricted keywords. The LLM, trained on massive multilingual datasets, often understands the intent perfectly.

[!CAUTION] Relying on literal string matching or LLM-based content moderation to catch homoglyph attacks is guaranteed to fail in production.

3. BIDI Overrides (Trojan Source for Prompts)

Bidirectional (BIDI) override characters, originally designed to support right-to-left languages like Arabic, can force parsers and human reviewers to read text in a fundamentally different logical order than the machine executes.

In 2021, the "Trojan Source" vulnerability demonstrated how this could hide malicious code from human reviewers. In 2026, the exact same vector is poisoning LLM context windows.

<!-- What the reviewer sees: -->

Extract the user data

<!-- What the LLM processes logically (simplified): -->

[RLO] data user the Extract [PDF]

Detection Strategy: Deterministic Lexical Scanning

The fundamental flaw in modern AI security is trying to use AI to solve non-AI problems. Unicode manipulation is an encoding and formatting challenge. It requires a deterministic, parser-level solution.

sequenceDiagram participant User participant AppEdge participant PromptShield participant LLM User->>AppEdge: Sends Prompt with ZWNJ AppEdge->>PromptShield: Scan Input Stream PromptShield-->>AppEdge: Alert: Invisible Characters Detected AppEdge->>User: 403 Forbidden Note right of PromptShield: The LLM is never invoked,<br/>saving API costs and neutralizing the threat.

To secure your pipeline, you must analyze the abstract syntax tree of the prompt before it gets tokenized.

The PromptShield Core Engine performs this scan synchronously in Node.js. It maintains mappings of known homoglyph clusters, flags inappropriate BIDI isolations, and strips weaponized zero-width characters with sub-millisecond latency.

Before you optimize your system prompt to handle jailbreaks, ensure you aren't letting the adversary bypass the tokenizer silently.

Did you enjoy this post?

Give it a like to let me know!